2025

Healthcare Technology

Product & Systems Design

Background

FirstVoice connects iPhone SOS with an adaptive AAC system designed for medical emergencies. When speech becomes unreliable, the interface surfaces medical context, narrows symptom selection, and structures communication between the user and first responders. The focus was reducing friction when clarity is most critical.

Core problem

Emergency response assumes verbal communication. For stroke survivors and individuals with aphasia, speech often breaks down under stress. Existing AAC tools are built for daily conversation, not triage. Medical details are buried, symbol boards are too broad, and responders lack immediate context. Delays happen where speed matters most.

Research

Stroke and AAC research, along with user interviews, revealed a gap between everyday communication tools and emergency needs.

Pain points

Large icon grids increase search time under stress

Medical history is not immediately visible

Manual navigation slows symptom reporting

Users are unsure when the system is listening

User goals

Communicate symptoms quickly

Surface condition and medication details automatically

Reduce decisions during high-stress moments

Trust that the system is working

Early Structure

Wireframes focused on reachability and speed. Navigation moved to the bottom for one-handed use. Medical information was consolidated into a single scannable card. The listening state became the primary surface. These structural decisions established clarity before visual refinement began.

Testing Before Development

Comparative testing ran before the core features were finalized. Users struggled to reach top navigation and hesitated when they could not tell whether the system was listening. A darker red palette read as more urgent, and minimal animation improved accessibility. Pulsing feedback clarified system state, while secondary tones maintained seriousness without escalation. These findings directly shaped the final feature set.

Smart Alert Beacon

When SOS is activated, FirstVoice triggers a loud, location-based alert tone that guides responders to the user. The goal was physical orientation first, interface second. The system anchors emergency response in the real environment before digital interaction begins.

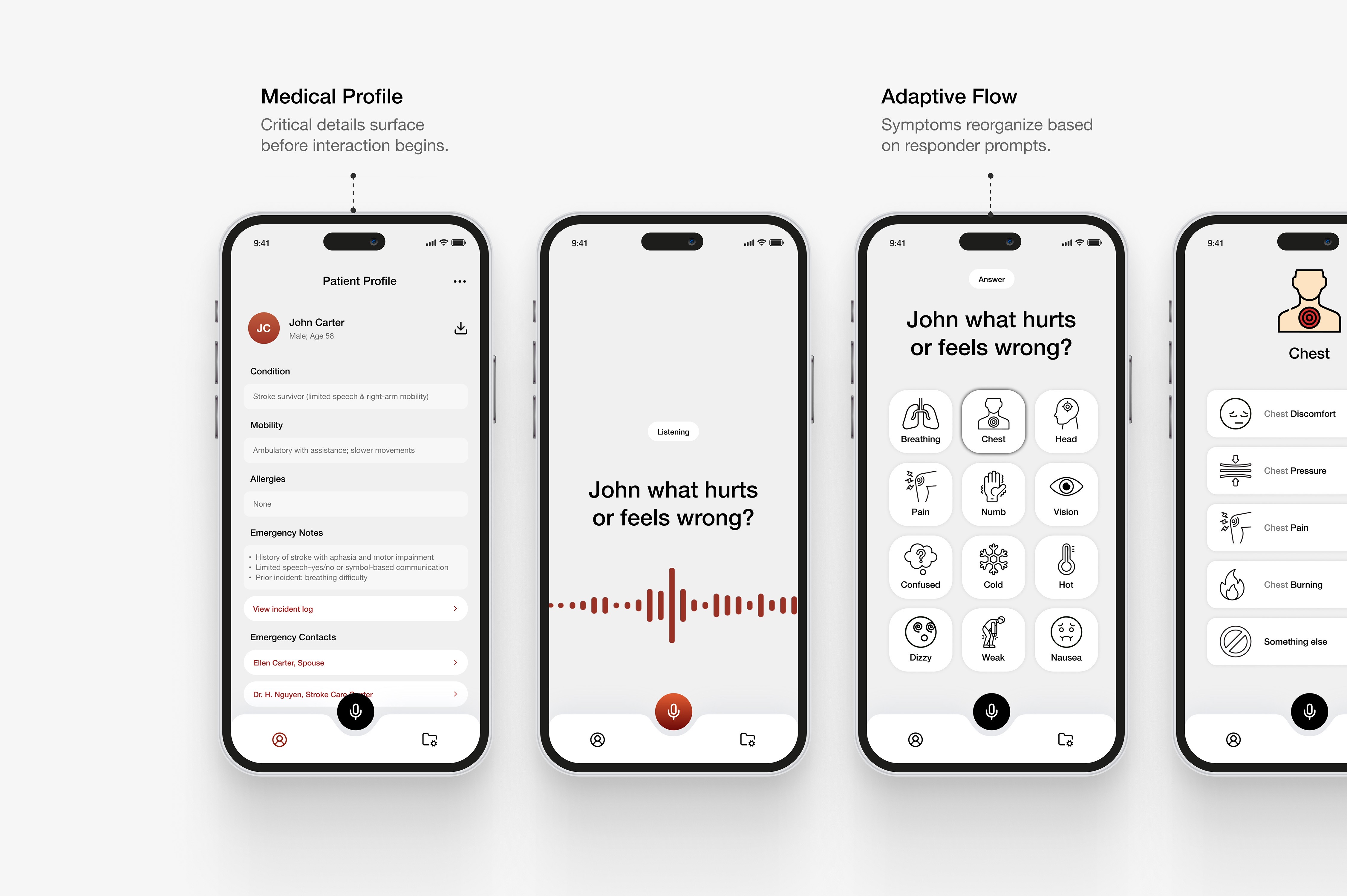

Dynamic Info Card

A structured medical profile surfaces automatically, and the system reads its critical details aloud: condition, communication method, mobility, allergies, and emergency contacts. Instead of requiring explanation, the system provides context immediately. This reduces responder uncertainty and speeds up decision-making.
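As a rough illustration of how such a card could be modeled, the sketch below stores the five details named above and assembles the text a speech synthesizer would read. The `MedicalProfile` type, its field names, the `spoken_summary` helper, and the sample values are all hypothetical; none of them come from the FirstVoice build.

```python
from dataclasses import dataclass, field


@dataclass
class MedicalProfile:
    """Hypothetical profile shape; fields mirror the details the card surfaces."""
    condition: str
    communication_method: str
    mobility: str
    allergies: list[str] = field(default_factory=list)
    emergency_contacts: list[str] = field(default_factory=list)


def spoken_summary(profile: MedicalProfile) -> str:
    """Build the read-aloud text, stating empty lists explicitly so the
    responder never has to guess whether a field was skipped."""
    allergies = ", ".join(profile.allergies) or "none reported"
    contacts = ", ".join(profile.emergency_contacts) or "none listed"
    parts = [
        f"Condition: {profile.condition}.",
        f"Communicates by: {profile.communication_method}.",
        f"Mobility: {profile.mobility}.",
        f"Allergies: {allergies}.",
        f"Emergency contacts: {contacts}.",
    ]
    return " ".join(parts)


# Made-up sample values, for illustration only.
example = MedicalProfile(
    condition="aphasia after stroke",
    communication_method="symbol board",
    mobility="walks with a cane",
    allergies=["penicillin"],
)
print(spoken_summary(example))
```

Keeping the summary as plain text means the same string could feed both the on-screen card and the audio output, so the two cannot drift apart.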

Adaptive Listening Mode

Adaptive Listening narrows the icon board in real time based on responder questions. Voice recognition filters and reorganizes options around the active prompt, allowing the user to confirm within a focused set instead of scanning a full grid. The interaction follows real triage flow, reducing search time and cognitive load.
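The narrowing step can be sketched as a keyword match between the responder's transcribed question and a set of triage topics. This is a simplified stand-in, not the project's recognition pipeline: the `TOPICS` table, its keywords, the icon labels, and the `narrow_board` function are assumptions made for illustration, and the transcript is taken as already-recognized text.

```python
import re

# Hypothetical triage topics: trigger words mapped to the icons shown for them.
TOPICS = {
    "pain": {
        "keywords": {"pain", "hurt", "hurts", "sore"},
        "icons": ["Head", "Chest", "Arm", "Stomach"],
    },
    "breathing": {
        "keywords": {"breathe", "breathing", "breath"},
        "icons": ["Short of breath", "Tight chest", "Breathing OK"],
    },
    "medication": {
        "keywords": {"medication", "medicine", "pills"},
        "icons": ["Took medication", "Missed dose", "No medication"],
    },
}

# The unfiltered board is every icon from every topic.
FULL_BOARD = [icon for topic in TOPICS.values() for icon in topic["icons"]]


def narrow_board(transcript: str) -> list[str]:
    """Return only the icons for topics mentioned in the responder's question.

    Falls back to the full board when nothing matches, so the user is
    never left with an empty screen.
    """
    words = set(re.findall(r"[a-z']+", transcript.lower()))
    matched = [
        icon
        for topic in TOPICS.values()
        if topic["keywords"] & words
        for icon in topic["icons"]
    ]
    return matched or FULL_BOARD


print(narrow_board("Where does it hurt?"))
```

The fallback to the full board is a deliberate choice in this sketch: a missed keyword costs some scan time, but never blocks the user from answering.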

If responder input stops, the system prompts a brief check-in before revealing the incident summary. Once the exchange is complete, a structured report is generated and made accessible via QR code, supporting documentation and handoff beyond the immediate exchange.
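One way to reason about this flow is as a small state machine with three steps: listening, check-in, and summary. The sketch below is a guess at that logic, not the shipped behavior. The `Step` names, the `next_step` function, the 20-second threshold, and the rule that new responder input returns the system to listening are all assumptions; report generation and the QR code are left out.

```python
from enum import Enum


class Step(Enum):
    LISTENING = "listening"
    CHECK_IN = "check_in"
    SUMMARY = "summary"


# Assumed threshold; the case study does not state how long the system waits.
INACTIVITY_TIMEOUT_S = 20.0


def next_step(current: Step, idle_seconds: float, check_in_confirmed: bool = False) -> Step:
    """Decide the next step from how long the responder has been silent.

    idle_seconds is the time since the last responder input;
    check_in_confirmed means the user answered the check-in prompt.
    """
    if current is Step.LISTENING and idle_seconds >= INACTIVITY_TIMEOUT_S:
        return Step.CHECK_IN
    if current is Step.CHECK_IN:
        if check_in_confirmed:
            return Step.SUMMARY
        if idle_seconds < INACTIVITY_TIMEOUT_S:
            # The responder spoke again, so resume listening (assumed behavior).
            return Step.LISTENING
    return current


print(next_step(Step.LISTENING, idle_seconds=25.0))
```

Routing every path to the summary through the check-in keeps the system from closing out an exchange the user still needs.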

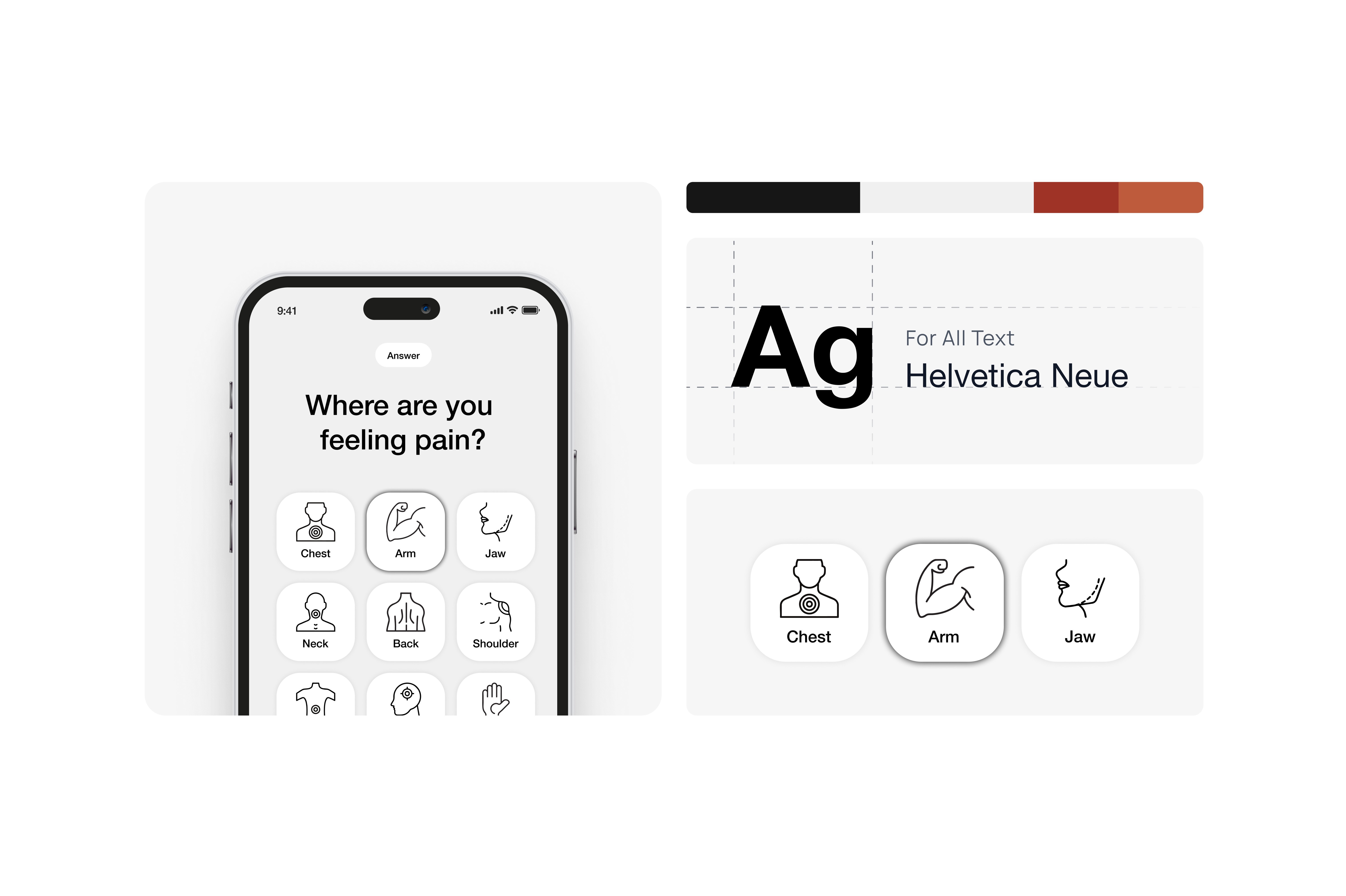

Design System

The design system prioritizes legibility and calm clarity. Typography reinforces hierarchy, and touch targets are kept large. The color palette signals urgency without increasing stress. Iconography was developed as a categorized set organized by symptom clusters, reducing scan time and supporting rapid recognition. Visual decisions support function, not decoration.

Reflection

FirstVoice reinforced that emergency design is defined by constraint. Interaction speed, visible hierarchy, and emotional restraint are functional decisions. By narrowing choices and structuring communication around real-world assessment patterns, the system supports clarity when speech is least reliable.